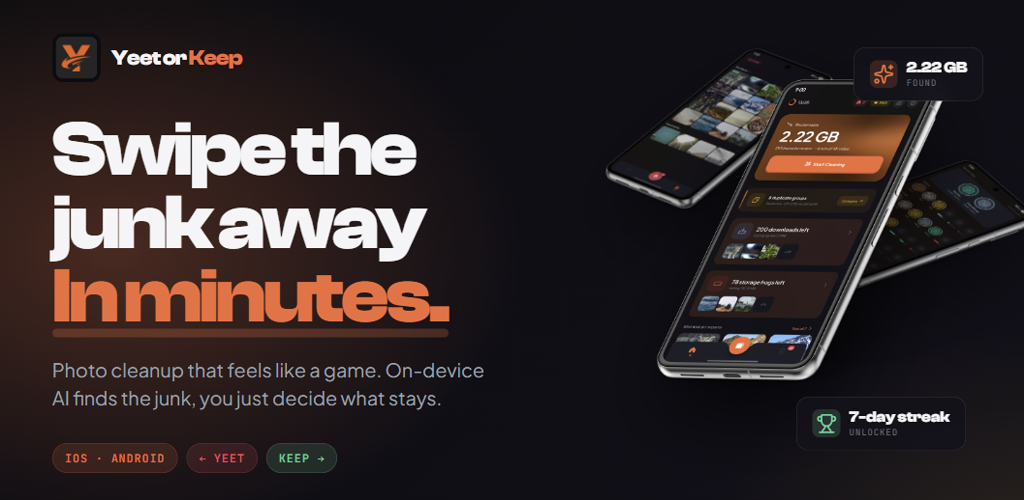

Yeet or Keep

<h2>The Problem</h2><p>The average smartphone has over 2,000 photos. Most people know half of them are junk — blurry shots, old screenshots, four versions of the same selfie — but cleaning them up means scrolling through every single one and deciding what to delete. It takes hours, so nobody does it. The photos pile up, storage fills, and the problem gets worse.</p><p>Existing solutions either upload your entire library to a cloud server for analysis (a privacy problem for something as personal as your camera roll) or just show you a grid and expect you to sort it yourself (which is the same tedious process with a different UI).</p><p>The core question I wanted to answer: <strong>can you make photo cleanup feel effortless, without ever touching a user's photos?</strong></p><h2>The Approach</h2><p>I built Yeet or Keep — a mobile app where you swipe through your photos like a card deck. Right to keep, left to yeet. The app analyzes your library on-device, surfaces the photos you're most likely to delete first, and reduces every decision to a single gesture.</p><p>Three decisions shaped the entire project:</p><p><strong>On-device only.</strong> No cloud uploads, no server, no API calls. Photos never leave the phone. This means all analysis has to run locally on the device itself, which introduced real constraints around performance and battery. But for a photo app, privacy isn't negotiable. It also means zero infrastructure costs and zero network dependency.</p><p><strong>Progressive analysis.</strong> Users want to start swiping immediately, not wait for a 10-minute scan. So the analysis pipeline delivers results in phases — the home screen populates in under 5 seconds using fast metadata, and deeper analysis (EXIF enrichment, blur detection, perceptual hashing for duplicates) runs in the background without interrupting the experience.</p><p><strong>Binary decisions.</strong> Keep or yeet. No folders, no tags, no organization system. 
The entire UX is designed to minimize decision fatigue. One photo, one swipe, move on.</p><h2>My Role</h2><p>Solo developer. I designed the product, built the mobile app (React Native + Expo), built the marketing website (Next.js), designed the monetization model, and handled Play Store submission. Every line of code, every design decision, every tradeoff — mine.</p><h2>The Result</h2><p>Shipped on Android via Google Play. Marketing site live at <a target="_blank" rel="noopener noreferrer" href="http://yeetorkeep.com">yeetorkeep.com</a>. Currently pre-launch — the product is built end-to-end (analysis pipeline, swipe engine, monetization, onboarding, gamification) and I'm focused on user acquisition and validating conversion assumptions before expanding to iOS.</p><p>Honest status: the engineering is complete, the business model is unproven. I built the full system before getting enough users to test it — which is itself a lesson I talk about below.</p><h2>What I Learned</h2><p><strong>I overbuilt before validating.</strong> I built 13 photo detectors, a gamification system with 56 badges, and a full monetization layer before confirming what users actually care about. In hindsight, I should have shipped with 4–5 core detectors, gotten real usage data, and expanded from there. Building depth before validating breadth is a trap, and I walked into it.</p><p><strong>Privacy constraints produced better architecture.</strong> Cutting off the cloud option forced me to solve problems I would have skipped — progressive loading so users aren't waiting, deferred persistence so background analysis doesn't stall the UI, interaction-aware scheduling so the pipeline yields to swipe gestures. The constraint made the product better, not just more private.</p><p><strong>Persistence design should come before the system that depends on it.</strong> The analysis pipeline writes thousands of results to local storage. 
My first version serialized the full state store on every batch, which caused visible UI stuttering. I spent a week debugging before tracing it to write volume and building a deferred flush system. Better upfront design would have prevented that entirely.</p><hr><h2>Technical Deep Dive</h2><p><em>For engineers and technical reviewers who want to see how it works under the hood.</em></p><h3>Analysis Pipeline</h3><p>The pipeline runs in four phases, each slower but deeper than the last:</p>
<table><thead><tr><th>Phase</th><th>What It Does</th><th>Time</th><th>Why It Exists</th></tr></thead><tbody>
<tr><td><strong>P0: Fast Pass</strong></td><td>Bulk metadata scan (filename, media type, timestamps)</td><td>~3–5s</td><td>Populates the home screen immediately</td></tr>
<tr><td><strong>P1: Recent Enrichment</strong></td><td>Full EXIF + file size for the 3 most recent months. Runs blur, burst, selfie, social media, and document detectors</td><td>~10–30s</td><td>Prioritizes photos users care about most</td></tr>
<tr><td><strong>P2: Full Enrichment</strong></td><td>Same as P1 for the remaining library</td><td>~1–2 min</td><td>Completes all category counts</td></tr>
<tr><td><strong>P3: Hash Pass</strong></td><td>64-bit dHash perceptual fingerprints for duplicate detection</td><td>~5–30 min</td><td>Runs silently, suspends during active swiping</td></tr>
</tbody></table>
<p>Each phase is independently useful: if the user closes the app after P1, they still got value from the scan.</p><p><strong>Detection approach:</strong> Metadata and pixel-level heuristics, not ML. Blur detection uses Laplacian variance cross-referenced with EXIF shutter speed to avoid flagging intentional long-exposure shots. Duplicate detection is two-tier: SHA-256 for byte-identical copies, and dHash with Hamming distance for visual similarity (with content-type filtering to exclude flat graphics from perceptual comparison). Burst detection uses temporal clustering: 3+ photos within 3 seconds that share dimensions.</p><p>I chose heuristics over ML for the MVP because they're free, private, and fast enough to process a full library in one session. The tradeoff is lower accuracy on edge cases. 
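</p>
<p>As a rough illustration of the perceptual-hash tier described above, here is a minimal TypeScript sketch of a 64-bit dHash and a Hamming-distance comparison. It is not the app's actual code: the function names, the assumption that the image has already been downscaled to a 9×8 grayscale grid, and the similarity threshold of 10 bits are all hypothetical choices made for the example.</p>

```typescript
// Sketch only. Assumes the photo was already decoded and downscaled to a
// 9x8 grayscale grid (row-major, 72 brightness values); that step is omitted.
const GRID_WIDTH = 9;
const GRID_HEIGHT = 8;

/** Returns a 64-bit difference hash as 8 bytes, one byte per grid row. */
function dHash(gray: ArrayLike<number>): Uint8Array {
  if (gray.length !== GRID_WIDTH * GRID_HEIGHT) {
    throw new Error("expected a 9x8 grayscale grid (72 values)");
  }
  const hash = new Uint8Array(GRID_HEIGHT);
  for (let y = 0; y < GRID_HEIGHT; y++) {
    let rowBits = 0;
    for (let x = 0; x < GRID_WIDTH - 1; x++) {
      const left = gray[y * GRID_WIDTH + x];
      const right = gray[y * GRID_WIDTH + x + 1];
      // One bit per neighbor pair: 1 when brightness drops from left to right.
      rowBits = (rowBits << 1) | (left > right ? 1 : 0);
    }
    hash[y] = rowBits;
  }
  return hash;
}

/** Counts the bits that differ between two hashes of equal length. */
function hammingDistance(a: Uint8Array, b: Uint8Array): number {
  if (a.length !== b.length) {
    throw new Error("hashes must have the same length");
  }
  let distance = 0;
  for (let i = 0; i < a.length; i++) {
    let diff = a[i] ^ b[i];
    while (diff !== 0) {
      distance += diff & 1;
      diff >>= 1;
    }
  }
  return distance;
}

/** Hypothetical threshold: treat hashes within 10 differing bits as near-duplicates. */
function looksSimilar(a: Uint8Array, b: Uint8Array, maxDistance = 10): boolean {
  return hammingDistance(a, b) <= maxDistance;
}
```

<p>Storing one byte per row keeps the sketch free of 64-bit integer handling. The right threshold depends on the library and would need tuning against real photos.</p>
<p>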
On-device ML is planned for a future version.</p><h3>Swipe Engine</h3><p>Gesture callbacks run entirely on the native UI thread via Reanimated worklets, so the JS thread is never in the critical path during a swipe. The card stack renders 2–3 cards, with proportional rotation (max 15°), velocity-based completion, and haptic feedback at the decision threshold.</p><p>The harder problem was coexistence with the pipeline. Both systems compete for CPU time, and the swipe path cannot drop frames. The pipeline checks an <code>isInteractionActive</code> flag before processing each photo and suspends during active swiping. Stores use debounced writes (1.5s window) to prevent rapid swiping from flooding MMKV with serializations.</p><h3>The Persistence Problem</h3><p>12 Zustand stores, all persisted to MMKV. During analysis, every batch of classified photos triggers a store update → MMKV write → full-store serialization. Processing 5,000+ photos meant thousands of redundant serializations and visible UI hitching.</p><p>The fix: a <strong>persist gate</strong> that defers all MMKV writes during active scanning and flushes once per phase completion. One <code>setState({})</code> per pending store, one serialization each.</p><p>This is the part I'd redesign from scratch. The gate works, but I found the problem the hard way: built the pipeline, watched it stutter, profiled, traced it to write volume, then built the gate. The store count (12) also grew organically as I added features, rather than from a data model planned upfront. I'm currently consolidating stores to reduce redundancy.</p><h3>Monetization Architecture</h3><p>Ad-supported free tier with optional premium upgrade. Free users get full access to the swipe experience with interstitial ads. Premium ($4.99/mo, $24.99/yr, $59.99 lifetime) removes ads and unlocks all category-specific cleanup tools. RevenueCat manages subscriptions and a 3-day trial. 
Fully built, not yet validated with real conversion data.</p><h3>Privacy Model</h3><p>No backend server. No cloud analysis. No account system. No PII stored anywhere except RevenueCat's receipt database (app store ID only). On-device hashing can take up to 30 minutes on large libraries where a cloud API could finish much faster — that's the real cost of the privacy constraint, and it's worth it for a photo app.</p><hr><h2>Access</h2><p><strong>Website</strong> <a target="_blank" rel="noopener noreferrer" href="http://yeetorkeep.com">yeetorkeep.com</a> <strong>Portfolio</strong> <a target="_blank" rel="noopener noreferrer" href="http://andrewjf.com">andrewjf.com</a> </p><p>Available on Android via Google Play. iOS release planned.</p>